Images are captured using streamer. It continuously captures 1000 JPEGs to individual files numbered 000 to 999, at (say) 10 frames per second. inotifywait detects when each file is closed and, if necessary, passes it to jpegtran to rotate it 180°.
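
The capture-and-rotate step can be sketched roughly as follows. This is only an illustration in Python, not the actual setup: the function names, the `.jpeg` suffix, and the two directories are assumptions, while the `inotifywait` and `jpegtran` options used are standard ones.

```python
import subprocess
from pathlib import Path

RING_SIZE = 1000  # frames are numbered 000..999, as described above


def ring_name(counter: int, suffix: str = ".jpeg") -> str:
    """Map a running frame counter onto the 000..999 ring of file names.

    The ".jpeg" suffix is an assumption; the text only gives the numbers.
    """
    return f"{counter % RING_SIZE:03d}{suffix}"


def rotate_command(src: Path, dst: Path) -> list[str]:
    """Build a jpegtran call that rotates src by 180 degrees into dst."""
    return ["jpegtran", "-rotate", "180", "-outfile", str(dst), str(src)]


def watch_and_rotate(capture_dir: Path, rotated_dir: Path) -> None:
    """Rotate each frame as soon as the capture process closes it.

    Both directories are hypothetical; this loop runs until interrupted.
    """
    watcher = subprocess.Popen(
        ["inotifywait", "-m", "-q", "-e", "close_write",
         "--format", "%f", str(capture_dir)],
        stdout=subprocess.PIPE,
        text=True,
    )
    assert watcher.stdout is not None
    for line in watcher.stdout:  # one file name per closed file
        name = line.strip()
        subprocess.run(
            rotate_command(capture_dir / name, rotated_dir / name),
            check=True,
        )
```

Waiting for `close_write` rather than file creation means a frame is only picked up once it has been written completely, so a half-written JPEG is never rotated.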

To serve on the Web, a CGI script uses inotify to detect the potentially rotated images, and generates a never-ending multipart sequence of JPEGs. A native C program is used to avoid calling subprocesses like inotifywait, which get left behind when the client terminates the connection, because Apache sends SIGKILL to terminate the CGI script.
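
To make the "never-ending multipart sequence" concrete, here is a minimal sketch of the framing, assuming the commonly used `multipart/x-mixed-replace` content type (the text does not name the exact type). It is written in Python purely for illustration; the real program is native C, and the boundary string and function names here are hypothetical.

```python
BOUNDARY = "frame"  # hypothetical boundary string


def stream_header(boundary: str = BOUNDARY) -> bytes:
    """CGI response header announcing an open-ended multipart stream."""
    return (
        f"Content-Type: multipart/x-mixed-replace; boundary={boundary}\r\n\r\n"
    ).encode("ascii")


def jpeg_part(jpeg: bytes, boundary: str = BOUNDARY) -> bytes:
    """Wrap one JPEG image as a single part of the multipart stream."""
    head = (
        f"--{boundary}\r\n"
        "Content-Type: image/jpeg\r\n"
        f"Content-Length: {len(jpeg)}\r\n\r\n"
    ).encode("ascii")
    return head + jpeg + b"\r\n"
```

A CGI script would write `stream_header()` once to standard output, then write `jpeg_part(...)` for every new image and flush after each one. The browser replaces the displayed image each time a new part arrives, and the sequence never sends a closing boundary.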

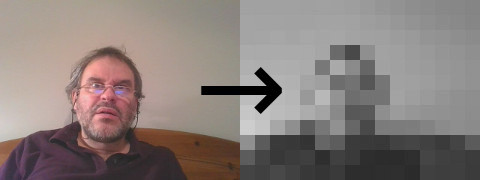

For motion detection, inotifywait is used once again to pick up the latest images; each image is then reduced with ImageMagick's convert and passed to a small C program.
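
The text does not say how the C program compares images. One common approach, shown here only as an assumption, is to shrink each frame to a tiny grayscale raster and compute the mean absolute difference between consecutive frames. The sketch below is in Python for brevity; the 32x24 size, the threshold, and all function names are hypothetical.

```python
def reduce_command(src: str, width: int = 32, height: int = 24) -> list[str]:
    """Build an ImageMagick call that writes raw 8-bit gray pixels to stdout.

    The target size is an assumption; "!" forces the exact dimensions.
    """
    return [
        "convert", src,
        "-colorspace", "Gray",
        "-resize", f"{width}x{height}!",
        "-depth", "8",
        "gray:-",
    ]


def motion_score(previous: bytes, current: bytes) -> float:
    """Mean absolute pixel difference between two equally sized gray frames."""
    if len(previous) != len(current) or not current:
        raise ValueError("frames must be non-empty and the same size")
    total = sum(abs(a - b) for a, b in zip(previous, current))
    return total / len(current)


def has_motion(previous: bytes, current: bytes, threshold: float = 8.0) -> bool:
    """Report motion when the score exceeds a (hypothetical) threshold."""
    return motion_score(previous, current) > threshold
```

Reducing the image first keeps the comparison cheap and makes it less sensitive to sensor noise and JPEG artifacts, since each reduced pixel averages over many original pixels.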